Social media parental control app saw 25% increase in self-harm, suicide alerts among teens in 2021

Nearly 75% of teenagers were involved in conversations or situations involving self-harm or suicide, the study found

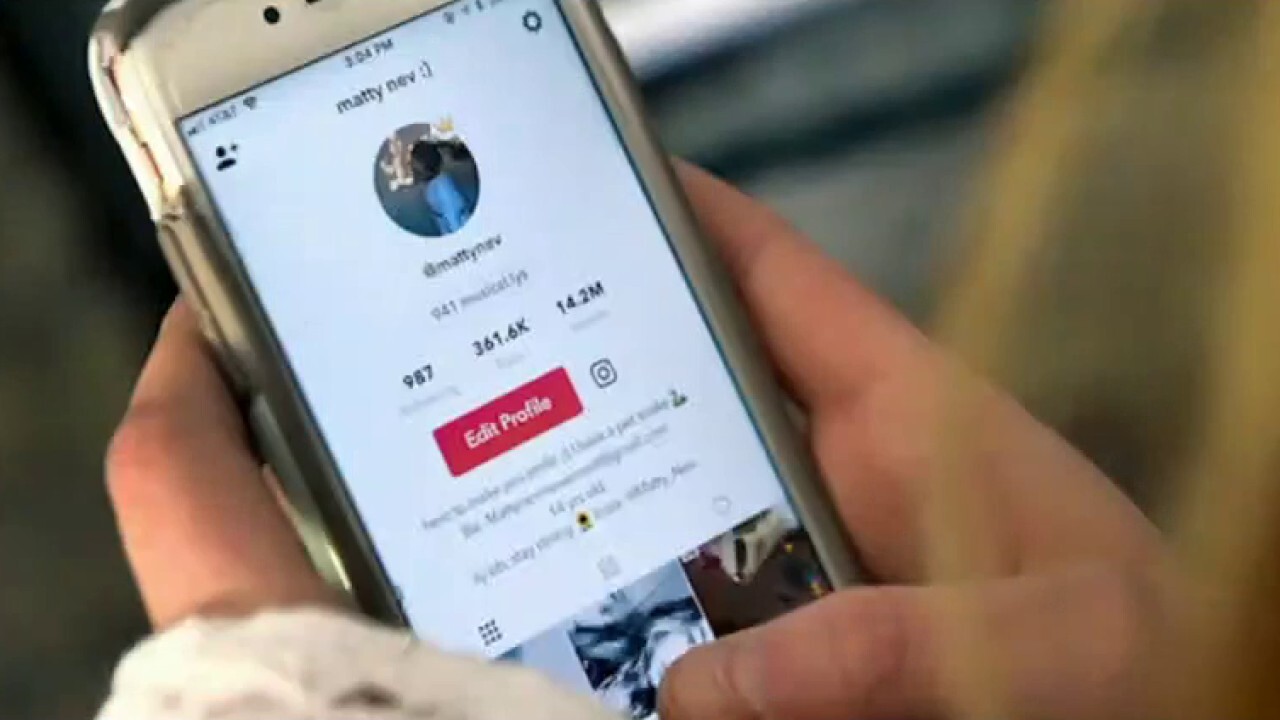

Bark, a parental social media watchdog app, saw a 25% increase in self-harm and suicide alerts among young people between the ages of 12 and 18 in 2021, according to a report released Friday.

The app, which alerts parents when it "detects potential issues" in kids' text messages and app activity, analyzed more than 3.4 billion messages across texts, emails and social media platforms in 2021 as the COVID-19 pandemic forced thousands of children across the U.S. to spend hours online every day.

"Per recent congressional hearings, we saw a great need for transparency around data, especially when it comes to minors and harmful content/people," Titania Jordan, chief parent officer and CMO of Bark, told FOX Business in a statement.

"We have provided what we have access to in hopes to protect even more children. It's time for other tech companies to do the same. It's also time for big tech to let users truly own their data — instead of outrightly preventing parents from keeping their kids safer online."

More than 43% of young teenagers, whom Bark calls "tweens," and nearly 75% of teenagers were involved in conversations or situations involving self-harm or suicide, Bark found in its survey of messages and social media activity. Bark did not specify in the report the age ranges it uses to distinguish "tweens" from "teenagers."

Early estimates for 2020 show more than 6,600 suicide deaths among U.S. youth, ages 10 to 24, according to the Centers for Disease Control and Prevention (CDC).

Emergency room (ER) visits for suicide attempts among adolescent girls, in particular, rose by 51% during the pandemic, and ER visits for suicide attempts among adolescent boys increased by 4% during the same time period, CDC data shows.

"Given that suicide is the second-leading cause of death in children in this nation, this is an alarming trend, and we have to do more for our children (and faster)," Jordan said.

Alerts for anxiety were most often sent for 15-year-old children, Bark found. Additionally, 32% of young teenagers and 56% of teenagers engaged in conversations about depression.

About 25% of teenagers between the ages of 13 and 18 are affected by anxiety, according to the Anxiety and Depression Association of America. About one in four children globally experienced depression during the pandemic, researchers from the University of Calgary in Canada found.

The logos of social media applications WeChat, Twitter, MeWe, Telegram, Signal, Instagram, Facebook, Messenger and WhatsApp are displayed on the screen of an iPhone. (Photo illustration by Chesnot/Getty Images)

The vast majority of young teenagers (72%) and teenagers (85%) alike either witnessed or experienced online bullying, otherwise known as cyberbullying. The CDC found in 2019 that about one in four minors engaged in cyberbullying.

While alcohol use decreased in 2020 and further decreased in 2021, more than 75% of young teenagers and 93% of teenagers engaged in conversations surrounding drugs and/or alcohol, Bark found.

Jordan noted that children are using Snapchat to purchase drugs that are sometimes laced with harmful substances like fentanyl.

"The unfortunate and preventable deaths that have occurred as a result, we need to focus on tangible improvements there ASAP. Kids should not have access to drug dealers through a social media app," she said.

Nearly seven in 10 young teenagers and about nine in 10 teenagers encountered "nudity or content of a sexual nature" in their texts or on apps, according to the monitoring app. Additionally, 10% of young teenagers and 20% of teenagers experienced predatory behavior online.

Two girls with masks looking at their phones the day before the obligatory use of masks outdoors comes into force Dec. 23, 2021, in Madrid, Spain. (Eduardo Parra/Europa Press via Getty Images)

The National Center for Missing & Exploited Children recorded a 95.7% increase in online enticement reports in 2021 compared to 2020.

The apps that Bark ranked worst for severe sexual content were, in order, messaging app Kik; blogging app Tumblr, which recently added a sensitive content filter; face-to-face conversation app Houseparty; communication app Discord; and cloud storage app Google Dropbox.

"Given that close to 10% of tweens and 20% of teens encountered predatory behaviors from someone online, according to our data, we have to do more to protect our children from sexual abuse, whether it takes place in real life or virtually," Jordan said. "The ways in which a child can be abused online are rapidly outpacing the protections that currently exist to prevent that abuse."

Jordan called out social media companies and tech giants like Google and Apple for "turning a blind eye to this abuse and putting out announcements that lead people to believe their new regulations will actually solve these problems."

"Unfortunately, they have not shown the ability or willingness to adequately self-regulate, and so parents and legislators have to step up and stop this," she said. "We are still at a point where minors will surface child sexual abuse content — that involves them — to major platforms, and those platforms refuse to remove it unless pressured by law enforcement."

Apple and Alphabet Inc.’s Google have touted parental controls as a way for parents to keep tabs on their children’s technology use. Google offers a parental control called Family Link that allows parents to manage children's apps and screen time.

"We are committed to providing our users with powerful tools to manage their iOS devices and are always working to make them even better," an Apple spokeswoman previously told FOX Business.