TikTok, Snap, YouTube to face questions from Congress over platforms' impacts on teens, children

Hearing follows scathing testimony from Facebook whistleblower Frances Haugen

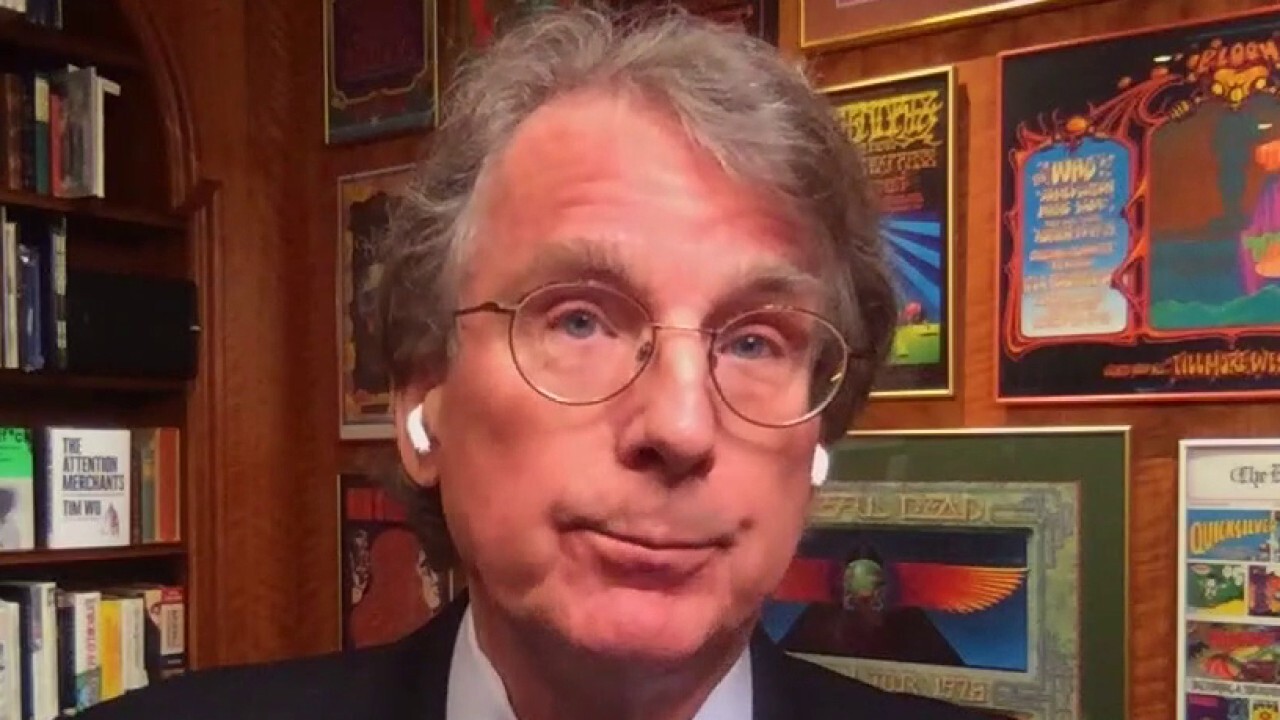

Facebook's algorithm promotes hate speech, disinformation, conspiracies: McNamee

Elevation Partners co-founder Roger McNamee argues the social media giant's value system conflicts with values of its users.

Though Facebook and Instagram have been at the center of the controversy over social media's potentially harmful effects on the physical and mental well-being of teens and children, the scrutiny they face appears to be only the tip of the iceberg.

On Tuesday morning, representatives from Snap, TikTok and YouTube will testify before the Senate Commerce, Science, and Transportation Committee's Subcommittee on Consumer Protection, Product Safety, and Data Security in a hearing titled "Protecting Kids Online: Snapchat, TikTok, and YouTube."

Sen. Marsha Blackburn, the ranking Republican on the subcommittee, said the three tech giants "all play a leading role in exposing children to harmful content" but emphasized that TikTok is an "especially egregious offender, both because they make the personal information of all TikTok users available to the communist Chinese government, and because the app pushes sexually explicit and drug-related content onto children."

Sen. Marsha Blackburn, R-Tenn., speaks during a Senate Judiciary Committee hearing on Wednesday, Sept. 30, 2020, on Capitol Hill in Washington, D.C. (Photo by Stefani Reynolds-Pool/Getty Images)

TikTok, which has surpassed 1 billion monthly active users around the globe, did not immediately return FOX Business' request for comment. However, the company has previously denied claims that its U.S. user data is shared with the Chinese government. TikTok will be represented at the hearing by the company's head of public policy for the Americas, Michael Beckerman.

Following leaks of Facebook's research related to Instagram's impact on teens' mental health, TikTok published informational guides on well-being and eating disorders and announced it would be expanding search interventions and rolling out opt-in viewing screens for sensitive content.

According to its latest transparency report, more than 81.5 million videos, including over 11.4 million in the U.S., were removed from the platform globally between April and June 2021 for violating its Community Guidelines or Terms of Service, a figure representing less than 1% of all videos uploaded. Of those videos, TikTok identified and removed 93% within 24 hours of their being posted, 94.1% before any user reported them, and 87.5% before they received a single view.

Of the removed videos, approximately 41.3% violated TikTok's policies on minor safety; 20.9% violated policies on illegal activities and regulated goods; 14% violated policies on nudity, sexually explicit content and pornography; 7.7% violated its policy on violent and graphic content; 6.8% violated its policy on harassment and bullying; and 5.3% violated its policy on suicide, self-harm and dangerous acts.

TikTok offers creators the ability to appeal their videos' removal and will reinstate content that's been incorrectly removed. From April to June, more than 4.6 million videos were reinstated after being appealed.

YouTube's vice president of government affairs and public policy, Leslie Miller, is expected to testify Tuesday that the platform has "clear policies that prohibit content that exploits or endangers minors on YouTube" and that "significant time and resources" have been committed to remove violative content as quickly as possible, according to excerpts of prepared testimony obtained by FOX Business.

Between April and June, YouTube removed more than 1.8 million videos for violations of its child safety policies, approximately 85% of which were removed before they had 10 views.

While YouTube's rules prohibit individuals under the age of 13 from using the platform, children can view age-appropriate content from trusted partners through the YouTube Kids app, which was launched in 2015. The company also has a feature called Supervised Experiences, which allows preteens to view content under certain restrictions.

According to Miller's testimony, the company removed more than 7 million accounts in 2021, including 3 million in the third quarter, that may have belonged to users under the age of 13.

Though more than 80% of Snapchat's users are 18 or older, Snap's vice president of global public policy, Jennifer Stout, is expected to testify that the company has made "thoughtful and intentional choices to apply additional privacy and safety policies and design principles to help keep teenagers safe," including banning public profiles for users under 18, rolling out a feature to limit the discoverability of minors in its "Quick Add" friend suggestions and deploying "age-gating tools to prevent minors from viewing age-regulated content and ads," according to prepared remarks obtained by FOX Business.

Other features Stout is expected to highlight include the ability to turn off location sharing and a streamlined, in-app system that allows users to report concerning content or behaviors to its Trust and Safety teams. In addition, the company has implemented a new safeguard to prevent Snapchat users between the ages of 13 and 17 from updating their birthday to an age of 18 or above and is planning to give parents the ability to view their teens' friends, manage their privacy and location settings, and see who they're talking to.

According to Snap's latest transparency report, which covers July to December 2020, the company received over 10 million reports of alleged content violations and took action against more than 5.5 million pieces of content globally that were found to violate its community guidelines. Of those, approximately 4.3 million (77.7%) violated policies on sexually explicit content; over 427,000 (7.7%) violated policies on regulated goods, which include illegal drugs, counterfeit goods and weapons; over 337,000 violated policies on harmful or violent content; and over 238,000 (4.3%) violated policies on harassment or bullying. Snapchat has over 500 million monthly active users worldwide.